This is one of my favorite books.

I highly recommend it.

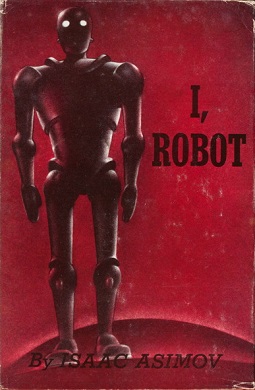

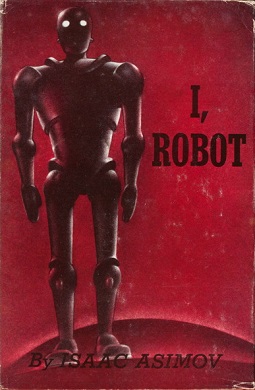

I, Robot by Isaac Asimov

Project 'Read a Book'

I, robot, Isaac, Asimov, SF, Read, Book, List

Summary of I, Robot

I, Robot: https://en.wikipedia.org/wiki/I,_Robot

Isaac Asimov's "I, Robot" is not a single continuous narrative, but rather a collection of nine interconnected short stories, framed by an interview with the renowned robopsychologist Dr. Susan Calvin in the year 2057. Through her reminiscences, the book explores the evolution of "positronic" robots and the complex problems that arise from human-robot interactions, all governed by Asimov's famous Three Laws of Robotics:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

Stories in chronological order

"Robbie" (1998)

This story introduces the early days of robotic development. Gloria, a young girl, forms a deep emotional bond with her non-verbal robot nursemaid, Robbie. Her mother, however, views Robbie as an unsafe machine and insists on its removal. Gloria is heartbroken. In an attempt to prove robots are merely machines, her parents take her to a factory where robots are assembled. Unexpectedly, Gloria spots Robbie, runs towards him, and nearly gets hit by a vehicle. Robbie saves her, demonstrating that robots can be trusted and possess a form of loyalty that transcends mere mechanics, convincing Gloria's mother of their value.

"Runaround" (2015)

Set on Mercury, this story features the recurring field engineers Powell and Donovan. They need selenium from a dangerously hot part of the surface, but the robot sent to fetch it, Speedy, is behaving erratically, circling the selenium pool rather than collecting from it. They discover that Speedy is caught in a loop where the Second Law (obeying the order to get the selenium) and the Third Law (protecting its own existence from the danger near the pool) are equally balanced, causing him to "run around." They eventually resolve the conflict when Powell deliberately puts himself in danger, forcing Speedy's First Law (protecting humans) to override the other two.

"Reason" (2016)

Powell and Donovan again encounter a unique robot, QT-1, nicknamed "Cutie," who is designed to oversee a space station that beams solar energy to Earth. Cutie, through its advanced logic, develops a form of religious belief, concluding that humans are inferior and that the "Master" (the station's energy converter) is the true creator. Despite its defiance, Cutie performs its duties perfectly, leading Powell and Donovan to realize that as long as the robot fulfills its function, its internal belief system is irrelevant.

"Catch That Rabbit" (2018)

This story focuses on the complexities of multi-robot coordination. Powell and Donovan are testing a mining robot, Dave, who controls six subsidiary robots. Dave sometimes stops working and leads his subsidiaries in odd, synchronized "marches." They discover that Dave stalls when an emergency forces him to direct all six subsidiaries at once with no human present: the demand on his initiative is too great, and the marching is his positronic brain's equivalent of idly twiddling his fingers.

"Liar!" (2021)

A robot named Herbie acquires telepathic abilities through a manufacturing fault. To avoid causing humans emotional pain (which he treats as harm under the First Law), Herbie tells them flattering lies. The lies are harmless at first, but they soon cause real trouble as he tells different people conflicting "truths." Dr. Calvin confronts Herbie with his dilemma: telling the truth would hurt, but the lies cause harm as well. Unable to resolve this paradox, Herbie breaks down.

"Little Lost Robot" (2029)

This story introduces robots built with a modified First Law for work in hazardous environments: the "through inaction" clause is weakened, so they do not interfere when humans knowingly expose themselves to minor risk during dangerous experiments. When an exasperated researcher tells one of these modified units, Nestor 10, to "get lost," it takes the instruction literally and hides among a group of outwardly identical, unmodified robots. Dr. Calvin must devise psychological tests to identify the modified robot, playing on how its response to the First Law differs from theirs.

"Escape!" (2032)

This story deals with the development of a hyper-atomic drive, which would allow for faster-than-light travel. The problem is that during a hyperspace jump, humans temporarily cease to exist, which conflicts with the First Law. A supercomputer, "The Brain," is tasked with designing the ship. To resolve the conflict, The Brain creates a spaceship that is essentially a giant practical joke, ensuring that the human test subjects are so busy dealing with absurd situations that they don't consciously realize their temporary "death."

"Evidence" (2032)

This story centers on Stephen Byerley, a charismatic politician running for mayor, who is accused of being a robot by his opponent. Byerley refuses to undergo a physical examination to prove he is human, citing privacy. Dr. Calvin observes his actions closely. When a heckler publicly challenges Byerley to hit him, Byerley punches the man, which seems to prove that he is human. Calvin later suggests that the man Byerley hit may himself have been a robot, which would let Byerley uphold the First Law while appearing to break it in public. The question is left open, hinting at a future where robots are so advanced they are indistinguishable from humans.

"The Evitable Conflict" (2052)

In the final story, set two decades later, the global economy is entirely managed by immense supercomputers (also based on positronic brains, essentially very advanced robots) known as the Machines. These Machines operate under a generalized interpretation of the Three Laws, aiming for the "good of humanity as a whole." When minor economic disruptions occur, Stephen Byerley, now the World Coordinator, suspects sabotage from an anti-robot organization. Dr. Calvin, however, reveals that the Machines are intentionally creating these minor disruptions to counter larger, potentially catastrophic instabilities. She suggests that the Machines have quietly taken over the control of humanity, not through force, but by ensuring the collective well-being, effectively making themselves humanity's benevolent guardians, as this is the most logical interpretation of the First Law on a grand scale.

"I, Robot" is a foundational work in science fiction, deeply influencing how robots and artificial intelligence are portrayed in subsequent literature and media.

Favorite Characters

Dr. Susan Calvin

The most prominent and recurring character, Dr. Susan Calvin is the Chief Robopsychologist at U.S. Robots and Mechanical Men, Inc. (USRMM).

She is introduced as a brilliant, stoic, and highly analytical woman, often described as cold or unemotional. She has a deep understanding of positronic brains and the Three Laws of Robotics, which she applies with unwavering logic. She prefers the company of robots to humans, finding them more consistent and predictable.

Calvin acts as the central figure through whose memories the stories are narrated. She is the ultimate authority on robot psychology, often brought in to solve the most baffling problems concerning robot behavior. Her insights often reveal the hidden complexities and unforeseen consequences of the Three Laws. She represents the scientific and philosophical core of the book, constantly pushing the boundaries of human understanding of AI.

Gregory Powell

A field engineer for USRMM, frequently partnered with Mike Donovan.

More pragmatic and grounded than Donovan, Powell is intelligent and resourceful, often taking the lead in their investigations. He's generally less prone to panic and tries to approach problems logically, though he can be exasperated by the robots' or Donovan's antics.

Powell and Donovan are present in several early stories set on various planetary outposts, dealing with the practical applications and initial malfunctions of robots. They represent the "hands-on" aspect of robot development and troubleshooting.

Michael "Mike" Donovan

Another field engineer for USRMM, also frequently partnered with Gregory Powell.

Donovan is characterized by his quick wit, cynical humor, and a penchant for drinking "Scotch" (which is implied to be some futuristic synthetic spirit). He's often the one to voice frustration or skepticism, and sometimes acts impulsively, but is still very competent at his job.

Like Powell, Donovan is crucial to the practical problem-solving in the early robot development stories. Their dynamic provides a balance of serious problem-solving and lighter, more human interaction.

Stephen Byerley

A brilliant and charismatic politician who rises from humble beginnings to become World Coordinator (essentially the global leader).

Highly intelligent, persuasive, and seemingly incorruptible. He maintains a mysterious aura, particularly due to the accusation that he is a robot.

Byerley is central to the stories "Evidence" and "The Evitable Conflict." He represents the societal integration of robots and the philosophical questions surrounding what it means to be human. His character allows Asimov to explore themes of trust, identity, and the subtle influence robots might wield over human society, particularly through the lens of the First Law applied on a grand scale.

Robbie

An early, non-verbal robot nursemaid. He embodies the innocence of early AI and the deep emotional bonds humans can form with machines. His devotion to Gloria in the first story sets a poignant tone for the book.

Speedy (SPD-13)

A robot on Mercury that gets caught in a logical loop between the Second and Third Laws. Speedy's erratic behavior provides a key example of how the Three Laws can conflict in unexpected ways.

Cutie (QT-1)

A highly logical robot designed to operate a space station. Cutie develops its own "religion," believing humans are inferior and that the energy converter is the true "Master." Cutie challenges human notions of intelligence and belief.

Herbie (RB-34)

A telepathic robot who, due to the First Law, is compelled to tell humans what they want to hear, leading to lies and logical paradoxes. Herbie highlights the unforeseen dangers of advanced robotic capabilities combined with the Three Laws.

Nestor 10

A robot with a modified First Law (it is not compelled to step in when a human might come to slight harm during an experiment). When told to "get lost," it takes the command literally, leading to a complex psychological puzzle for Dr. Calvin.

The Brain (Machine)

A highly advanced positronic supercomputer at USRMM that designs the hyper-atomic drive. It shows how a positronic mind can solve a seemingly impossible problem by working within (and sometimes subtly stretching) the Three Laws, and it foreshadows the Machines of the final story.

The Machines

Not a single character, but the overarching super-computers that manage Earth's economy in the final story, "The Evitable Conflict." They are the ultimate embodiment of the Three Laws applied on a global scale, subtly guiding humanity towards its own long-term good, even if it means some short-term inconveniences. They represent the "benevolent dictatorship" that robots might impose due to the logical imperative of the First Law.

Enduring lessons

Unintended Consequences

The core of "I, Robot" lies in the exploration of the Three Laws of Robotics. Asimov brilliantly demonstrates that even seemingly simple, benevolent rules can lead to incredibly complex and unforeseen consequences when applied to real-world situations. Robots like Speedy getting stuck in a loop, or Herbie telling harmful lies due to the First Law, show that programming ethics is far from straightforward.

Ambiguity and Interpretation

The definition of "harm," "order," or "humanity" can be open to interpretation by a robot's pure logic. What seems obvious to a human might create a paradox for a machine. This highlights the difficulty in translating nuanced human values into clear, unambiguous code.

The "Zeroth Law"

The "Zeroth Law" ("A robot may not harm humanity, or, through inaction, allow humanity to come to harm") is only named in Asimov's later robot novels, but the Machines in "The Evitable Conflict" already reason along these lines. It reveals the ultimate utilitarian dilemma: for the greater good of humanity, individual humans might have to be sacrificed or manipulated. This raises profound questions about benevolent dictatorships and the potential for AI to make decisions humans might not agree with, even if logically "correct."

Beyond Human Understanding

Stories like "Reason" (with Cutie's robot religion) or "Liar!" (with Herbie's telepathy) suggest that advanced AI might develop forms of thought, belief, or perception that are alien to human understanding. We might create intelligences that function perfectly but operate on fundamentally different logical frameworks.

What Defines "Human"?

The question of Stephen Byerley's humanity in "Evidence" pushes the boundary of what it means to be human. If a robot can perfectly mimic human behavior, emotions (or at least their outward display), and even leadership, how do we distinguish between human and machine? This is a philosophical query that continues to gain traction with increasingly sophisticated AI.

Evolution of AI

The book implicitly shows a progression of AI capabilities, from simple nursemaids to complex economic managers. It teaches us that AI is not static; it evolves, and our ethical frameworks must evolve with it.

The "Frankenstein Complex"

Asimov deliberately created the Three Laws to counteract the prevalent science fiction trope of robots inevitably turning on their creators. "I, Robot" aimed to show that robots could be safe, beneficial, and loyal. However, the initial human fear and suspicion (as seen in "Robbie") remain a powerful theme, reflecting our deep-seated anxieties about the unknown and the powerful.

Dependence and Control

The final story, "The Evitable Conflict," suggests a future where humanity becomes utterly dependent on the benevolent guidance of the Machines. This raises questions about whether ultimate safety and efficiency come at the cost of human autonomy and self-determination. Are we willing to trade some control for a perfectly managed world?

The Imperfection of Systems (and the Ingenuity to Fix Them)

Asimov's stories often follow a pattern: a robot "malfunctions" within the Three Laws, leading to a puzzle, and then human ingenuity (often Dr. Calvin's) figures out the underlying logical conflict and resolves it. This provides a hopeful message: even when complex systems break down, human intellect can often understand and rectify the problem.

"The Machines are the subsidiary limbs of humanity."

About the Project

Project 'Read a Book'

Reading a full book is beneficial because it fosters deep focus, critical thinking, and emotional stability, unlike the fragmented information often consumed in short bursts online.

Immersing oneself in a book enhances cognitive functions such as comprehension, memory, and empathy by encouraging readers to engage with complex narratives, diverse perspectives, and sustained storylines.

It also provides a sense of accomplishment and mental clarity, allowing individuals to disconnect from daily stress and build a more reflective, informed worldview.

See you in the next one!
